Xuelab Agent-Nobel

Xue Lab OpenClaw Agent Update

This update moves the Xue Lab OpenClaw agent from a helper that answers questions toward a research partner that can actively advance work: clearer task decomposition, steadier tool coordination, smoother early-stage scientific scouting, and built-in GPT-Image-2 support for research figures, report illustrations, and mobile-readable cards.

This release turns several high-friction lab workflows into executable capabilities, spanning the model foundation, image generation, and mobile-readable presentation.

The GPT-5.5 reasoning and execution foundation is better at handling messy, real-world research requests, from question decomposition, source retrieval, and evidence comparison to tables, reports, web pages, and visual assets.

Built-in GPT-Image-2 support can generate retina science visuals, mechanism diagrams, talk covers, web hero images, and data-card illustrations directly within lab workflows.

Mobile-readable presentation optimizes headers, type size, column width, spacing, and wrapping for three-column tables, gene cards, and research-window summaries on phones.

GPT-5.5 in Nobel

In Nobel, GPT-5.5 shows up as fewer prompt patches, steadier multi-step execution, better context carryover, and stronger coordination across code, web pages, documents, charts, and source material.

Nobel can turn a request such as “research this RP gene and make it into a presentation page” into a sequence of retrieval, evidence filtering, structured summaries, image generation, layout, and browser checks.

It is designed for hypothesis scouting across genes, phenotypes, OCT windows, population differences, animal models, and possible mechanisms.

It writes pages, edits scripts, runs checks, fixes layouts, and cleans assets so that ideas become usable files that can be opened, reused, and iterated on.

It reads, uses, and generates images, then places them into pages or reports so results are easier to understand in lab meetings and collaborations.

The scores below are compiled from OpenAI's official GPT-5.5 launch page. Each benchmark is shown as a percentage score; longer bars indicate higher performance. A dash indicates that the official table did not list a score for that model.

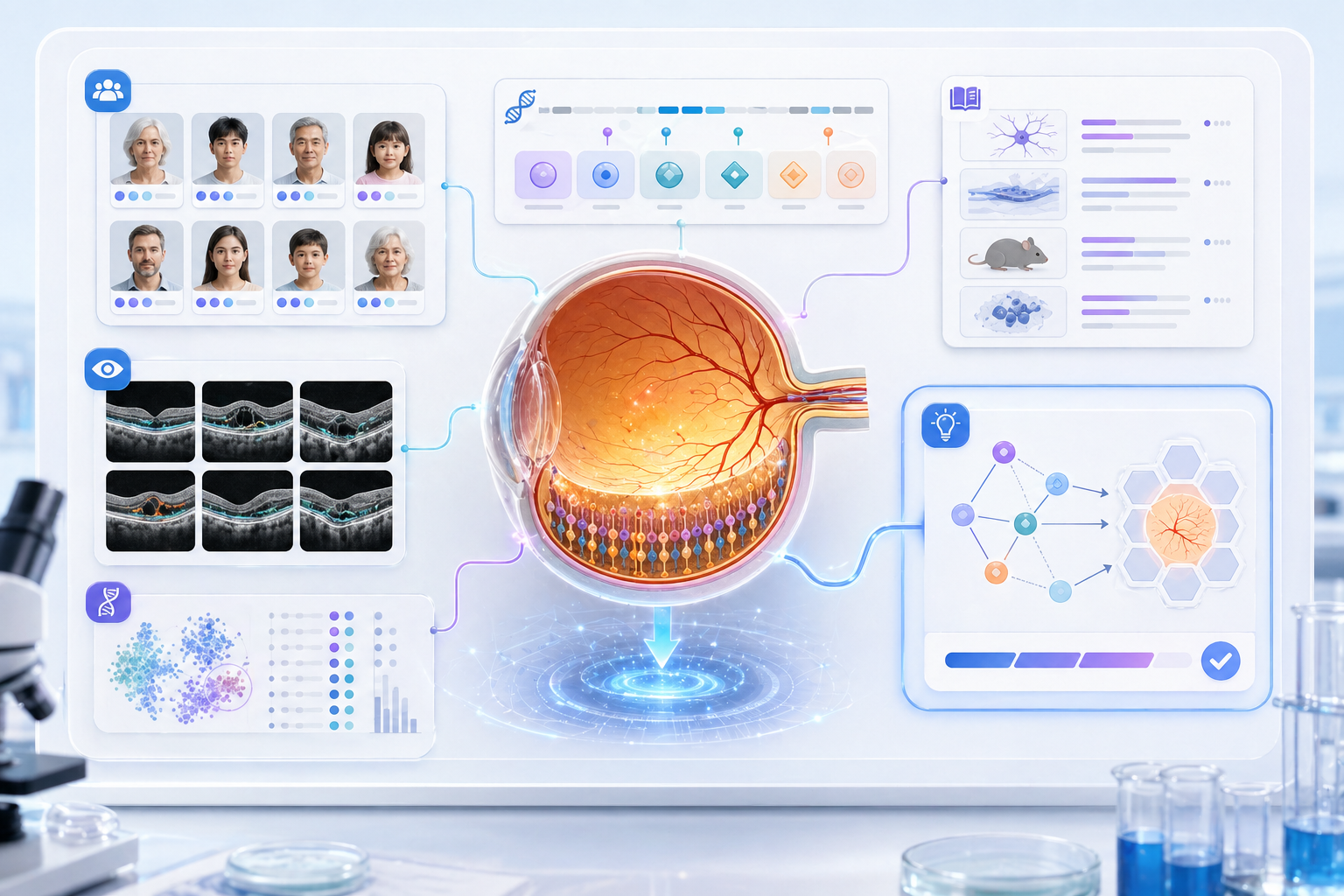

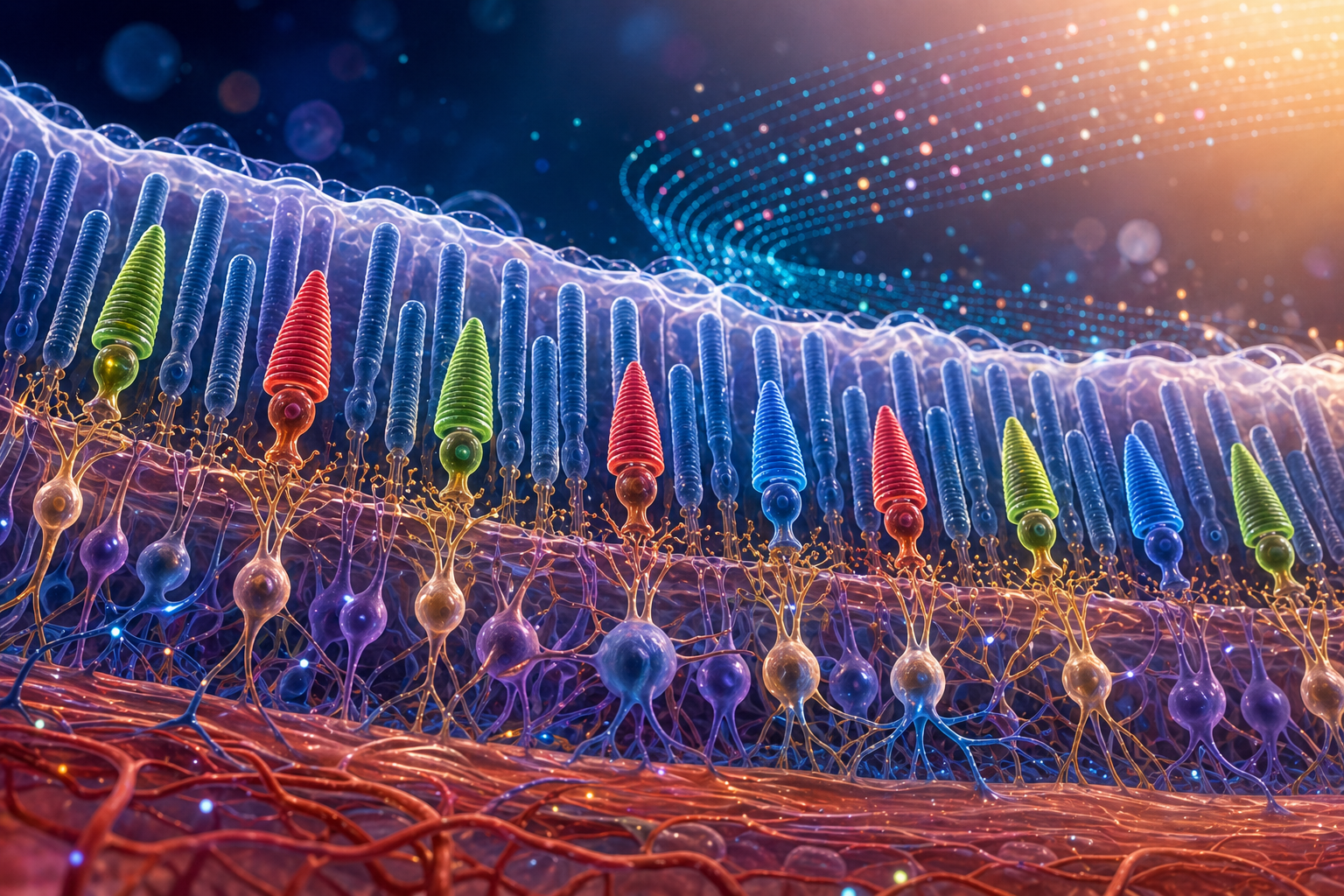

The two examples below are research visuals generated for this page and used directly on it. In real workflows, Nobel can pass key points from papers, mechanism hypotheses, meeting style, and page placement into the image-generation step together.

The first visual combines cohorts, OCT, genes, literature, and hypotheses into one image, suitable for web sections or lab-meeting transition slides.

The second visual explains the relationship between cones, rods, neural layers, and data particles, suitable for report covers, web hero sections, or science communication.

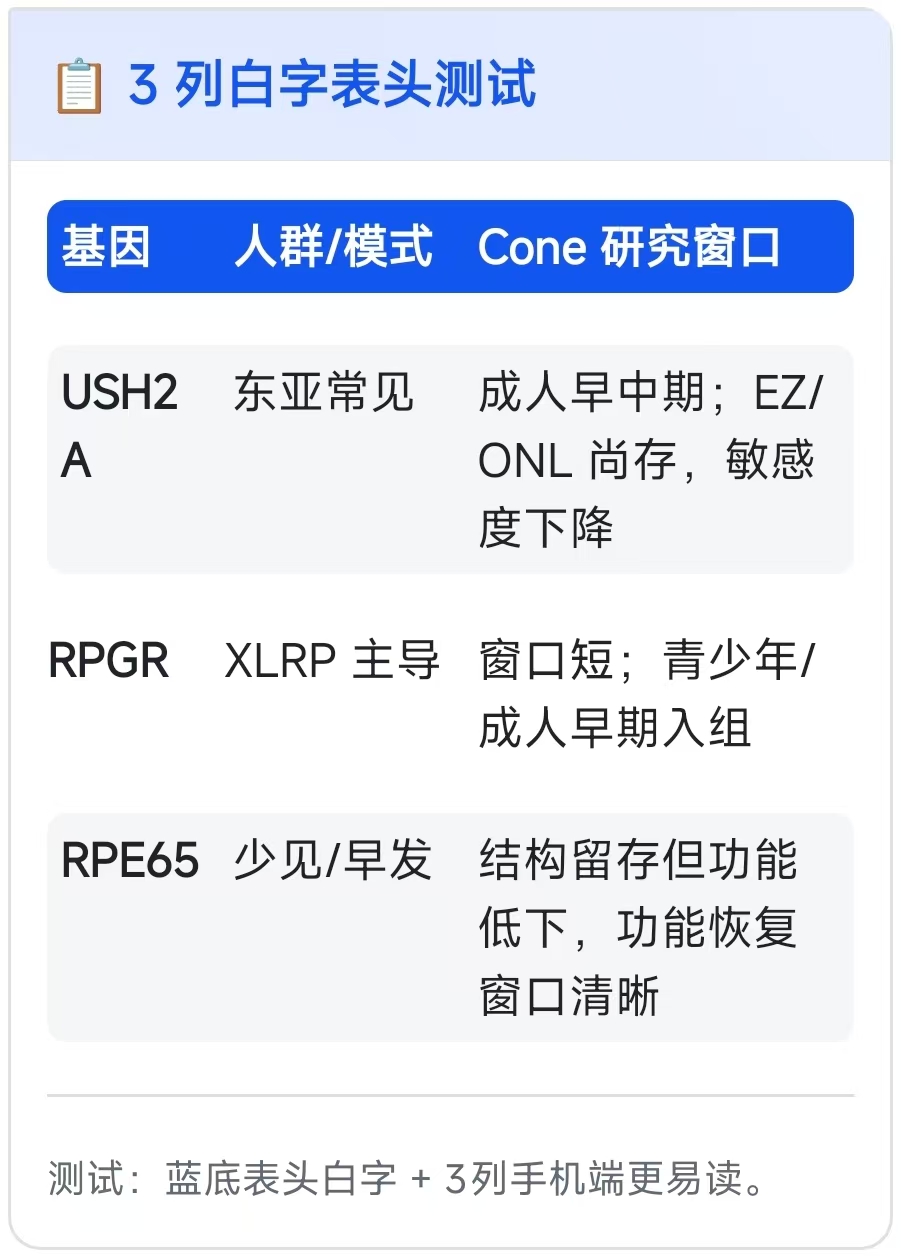

Mobile Card Table Readability

This screenshot captures a common problem: fitting a three-column table into a mobile card is only the first step. Readers still need to identify the gene, cohort or model, and cone-research window within a few seconds. Nobel now prioritizes mobile-first restructuring for this kind of table.

Use high-contrast headers while controlling radius and padding so the title area does not squeeze the content.

Keep gene-name columns stable, allow explanatory columns to wrap naturally, and split long judgments into shorter phrases.

Use subtle row grouping and clearer spacing so entities such as USH2A, RPGR, and RPE65 remain easy to scan.

When needed, convert a three-column table into one compact card per gene while preserving the table semantics.
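The last point, turning a three-column table into one card per gene, can be sketched roughly as follows. This is a minimal illustration and not Nobel's actual implementation: the function names, the `gene-card` class, and the cell values are all hypothetical placeholders, and only the three column labels come from the table described above. Each header is kept as a `<dt>` label so the card still says which column a value came from.

```python
from html import escape

# Column labels follow the table described above. The cell values in the
# usage example are placeholders, not real findings.
HEADERS = ("Gene", "Cohort or model", "Cone-research window")


def row_to_card(row, headers=HEADERS):
    """Render one table row as a compact card, keeping each header as a label."""
    if len(row) != len(headers):
        raise ValueError("row and headers must have the same length")
    gene, *rest = row
    lines = ['<article class="gene-card">', f"  <h3>{escape(gene)}</h3>", "  <dl>"]
    # Pair every remaining cell with its column header so table semantics survive.
    for label, value in zip(headers[1:], rest):
        lines.append(f"    <dt>{escape(label)}</dt>")
        lines.append(f"    <dd>{escape(value)}</dd>")
    lines += ["  </dl>", "</article>"]
    return "\n".join(lines)


def table_to_cards(rows, headers=HEADERS):
    """Convert every row into its own card, preserving row order."""
    return "\n".join(row_to_card(row, headers) for row in rows)


# Hypothetical usage with placeholder cell text.
rows = [
    ("USH2A", "placeholder cohort", "placeholder window"),
    ("RPGR", "placeholder model", "placeholder window"),
]
cards_html = table_to_cards(rows)
```

Using a description list inside each card is one reasonable choice here: the gene name stays a stable heading, while the explanatory values can wrap freely on a narrow screen.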

After this upgrade, Nobel is best treated as a research-action orchestrator: it receives a question, advances evidence organization, and turns outputs into pages, charts, cards, and reports.

Start with a gene, disease, phenotype, cohort, or research window; Nobel first defines task boundaries and evidence types.

Convert literature, databases, clinical windows, and experimental models into comparable structured cards.

Use GPT-Image-2 to generate mechanism diagrams, science visuals, and page illustrations, then pair them with the written structure.

Deliver openable pages and check both desktop and mobile views, with special attention to tables, cards, and long text blocks.
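The "comparable structured cards" step in the workflow above could look something like the sketch below. It is an assumption-laden illustration, not a description of Nobel's internals: the class names, field names, and summaries are hypothetical, and only the four evidence types are taken from the text.

```python
from dataclasses import dataclass, field

# Evidence types named in the workflow above; everything else is illustrative.
SOURCE_TYPES = ("literature", "database", "clinical window", "experimental model")


@dataclass
class EvidenceCard:
    topic: str        # gene, disease, phenotype, cohort, or research window
    source_type: str  # one of SOURCE_TYPES
    summary: str      # short statement of what the source shows

    def __post_init__(self):
        if self.source_type not in SOURCE_TYPES:
            raise ValueError(f"unknown source type: {self.source_type!r}")


@dataclass
class ResearchTask:
    topic: str
    cards: list = field(default_factory=list)

    def add(self, source_type, summary):
        """Attach one piece of evidence to this task as a structured card."""
        self.cards.append(EvidenceCard(self.topic, source_type, summary))

    def by_source_type(self):
        """Group summaries so evidence types can be compared side by side."""
        grouped = {t: [] for t in SOURCE_TYPES}
        for card in self.cards:
            grouped[card.source_type].append(card.summary)
        return grouped


# Hypothetical usage with placeholder summaries.
task = ResearchTask("RPGR")
task.add("literature", "placeholder summary of a paper")
task.add("experimental model", "placeholder summary of an animal model")
grouped = task.by_source_type()
```

Giving every card the same fields is what makes the evidence comparable: a later step can lay the groups out as table columns or mobile cards without caring where each summary came from.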

The Nobel capability descriptions on this page are based on the Xuelab Agent update request; model information refers to OpenAI's official pages.